Generative Visual Instruction Tuning

News Release Summary

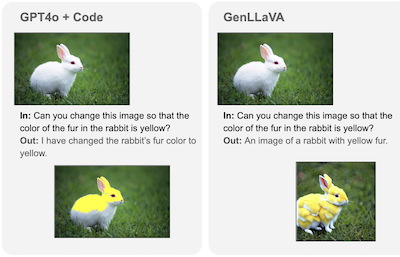

Researchers at Rice University and Google DeepMind have developed GenLLaVA, a multimodal AI system that can understand images, generate new pictures, and edit existing ones without losing performance in any single capability—a persistent challenge in the field. The team combined three existing AI models through a novel single-stage training approach using automatically generated instruction data from GPT-4V, rather than the traditional multi-stage process. Testing showed GenLLaVA outperformed similar models like GILL and Unified-IO 2 across visual understanding benchmarks while maintaining competitive image generation quality. This breakthrough demonstrates that AI systems can successfully balance multiple visual capabilities simultaneously, paving the way for more versatile digital assistants that could handle diverse visual tasks from answering

abstract

We propose to use automatically generated instruction-following data to improve the zero-shot capabilities of a large multimodal model with additional support for generative and image editing tasks. We achieve this by curating a new multimodal instruction-following set using GPT-4V and existing datasets for image generation and editing. Using this instruction set and the existing LLaVA-Finetune instruction set for visual understanding tasks, we produce GenLLaVA, a Generative Large Language and Visual Assistant. GenLLaVA is built through a strategy that combines three types of large pretrained models through instruction finetuning: Mistral for language modeling, SigLIP for image-text matching, and StableDiffusion for text-to-image generation. Our model demonstrates visual understanding capabilities superior to LLaVA and additionally demonstrates competitive results with native multimodal models such as Unified-IO 2, paving the way for building advanced general-purpose visual assistants by effectively re-using existing multimodal models. We open-source our dataset, codebase, and model checkpoints to foster further research and application in this domain.

details

citation

@article{hernandez2024generative,

title = {Generative Visual Instruction Tuning},

author = {Hernandez, Jefferson and Villegas, Ruben and Ordonez, Vicente},

year = {2024},

journal = {arXiv preprint arXiv:2406.11262},

url = {https://arxiv.org/abs/2406.11262},

}