PropTest: Automatic Property Testing for Improved Visual Programming

News Release Summary

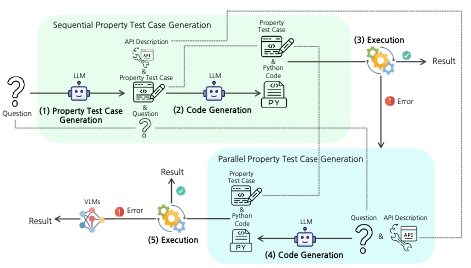

Researchers at Rice University and Columbia University have developed a method called PropTest that improves the reliability of AI systems that solve visual reasoning tasks by writing computer code. These "visual programming" systems work by using large language models to generate Python programs that answer questions about images, such as identifying objects or answering multi-step questions, but they frequently produce code that runs without crashing yet still gives the wrong answer due to flawed logic.

PropTest addresses this by borrowing an old idea from software engineering: write tests before writing the code. Specifically, the system first prompts a language model to generate short test cases that check expected properties of the answer. For instance, a test might verify that the result is a string, that it is one or two words long, and that it actually names a type of appliance when the question asks about appliances. These tests are then fed back into the model as additional context when generating the main solution code, steering the model toward more logically correct programs. When the generated code fails those tests at runtime, the system falls back to a standard vision-language model rather than returning a bad answer.

Tested across four benchmarks covering visual question answering and object localization, PropTest consistently outperformed the baseline ViperGPT system across multiple open-source language models, with gains as large as 6 percentage points on the GQA dataset and 8 points on RefCOCO+. Notably, the researchers conducted their main experiments using freely available models like CodeLlama and Llama3 rather than proprietary APIs, addressing a reproducibility problem that has hampered comparisons in this research area.
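The test-then-fallback flow described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the names `property_test`, `is_appliance`, `answer_with_fallback`, and the `KNOWN_APPLIANCES` word list are hypothetical, and the semantic check is stubbed with a fixed vocabulary where a real system would presumably query a language or vision-language model.

```python
from typing import Any, Callable

# Hypothetical vocabulary standing in for a model-based semantic check.
KNOWN_APPLIANCES = {"microwave", "refrigerator", "toaster", "oven", "dishwasher"}


def is_appliance(answer: str) -> bool:
    """Stub semantic check (assumption: a real test would ask a model)."""
    return answer.lower() in KNOWN_APPLIANCES


def property_test(answer: Any) -> None:
    """Check expected properties of an answer to an appliance question."""
    # Data-type consistency: the answer must be a string.
    assert isinstance(answer, str), "answer must be a string"
    # Output syntax: the answer must be one or two words long.
    assert 1 <= len(answer.split()) <= 2, "answer must be one or two words"
    # Semantic property: the answer must name a type of appliance.
    assert is_appliance(answer), "answer must name an appliance"


def answer_with_fallback(
    program: Callable[[], Any],
    test: Callable[[Any], None],
    fallback: Callable[[], str],
) -> Any:
    """Run the generated program; use the fallback if it crashes or fails the test."""
    try:
        result = program()
        test(result)
        return result
    except Exception:
        # Covers both runtime errors in the program and failed assertions.
        return fallback()
```

A short usage example, with lambdas standing in for a generated program and for the fallback vision-language model:

```python
fallback_vlm = lambda: "toaster"
print(answer_with_fallback(lambda: "microwave", property_test, fallback_vlm))
print(answer_with_fallback(lambda: "on the kitchen counter", property_test, fallback_vlm))
```

The first call keeps the program's answer because it passes all three checks; the second returns the fallback answer because the four-word output fails the length check.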

Abstract

Visual Programming has recently emerged as an alternative to end-to-end black-box visual reasoning models. This type of method leverages Large Language Models (LLMs) to generate the source code for an executable computer program that solves a given problem. This strategy has the advantage of offering an interpretable reasoning path and does not require finetuning a model with task-specific data. We propose PropTest, a general strategy that improves visual programming by further using an LLM to generate code that tests for visual properties in an initial round of proposed solutions. Our method generates tests for data-type consistency, output syntax, and semantic properties. PropTest achieves comparable results to state-of-the-art methods while using publicly available LLMs. This is demonstrated across different benchmarks on visual question answering and referring expression comprehension. In particular, PropTest improves ViperGPT by obtaining 46.1% accuracy (+6.0%) on GQA using Llama3-8B and 59.5% (+8.1%) on RefCOCO+ using CodeLlama-34B.

Details

Citation

@inproceedings{koo2024proptest,
  title = {PropTest: Automatic Property Testing for Improved Visual Programming},
  author = {Koo, Jaywon and Yang, Ziyan and Cascante-Bonilla, Paola and Ray, Baishakhi and Ordonez, Vicente},
  year = {2024},
  booktitle = {Conf. on Empirical Methods in Natural Language Processing. EMNLP 2024},
  url = {https://arxiv.org/abs/2403.16921},
}

Automatically generated questions, main contributions, and limitations of this paper

Questions this paper helps answer

- What is PropTest? PropTest is a visual programming framework that uses an LLM to generate property tests before generating executable code for visual reasoning tasks.

- What problem does PropTest address? It targets cases where generated visual programs run without syntax or runtime errors but still return logically wrong answers.

- How do property tests improve visual programming? The tests encode expected answer properties such as data type, output format, semantic category, or visual attributes, then guide code generation and help detect invalid outputs.

- What tasks does the paper evaluate? The paper evaluates visual question answering on GQA and A-OKVQA, and visual grounding on RefCOCO and RefCOCO+.

- Why is the use of open-source LLMs important? The paper emphasizes reproducible visual programming experiments using models such as Llama3 and CodeLlama, reducing dependence on closed or deprecated API models.

Main contributions

- The paper introduces automatic property test generation as a general mechanism for improving LLM-generated visual programs.

- PropTest supports both text-answer tasks and bounding-box grounding tasks by generating tests tailored to the expected output type.

- The method improves ViperGPT across multiple benchmarks and LLM backbones, including reported gains of +6.0 percentage points on GQA with Llama3-8B (46.1% accuracy) and +8.1 percentage points on RefCOCO+ with CodeLlama-34B (59.5%).

- The work shows that generated tests can improve code quality rather than merely increasing reliance on fallback vision-language models.

- The paper contributes a more reproducible evaluation setting for visual programming by focusing on publicly available LLMs and an API-free implementation path.
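For the bounding-box grounding tasks mentioned in the contributions, a property test has to check a different output type than a text answer. The sketch below is only an illustration under stated assumptions: the function name `bbox_property_test` is hypothetical, the box is assumed to be `(x1, y1, x2, y2)` in pixel coordinates, and only structural properties are checked. Any semantic check on the boxed region (for example, asking a vision model whether it contains the referred object) is omitted here.

```python
from typing import Sequence


def bbox_property_test(
    box: Sequence[float], image_width: int, image_height: int
) -> None:
    """Check structural properties of a predicted box (assumed x1, y1, x2, y2)."""
    # Data-type consistency: exactly four numeric coordinates.
    assert len(box) == 4, "box must have four coordinates"
    assert all(isinstance(v, (int, float)) for v in box), "coordinates must be numeric"
    x1, y1, x2, y2 = box
    # Output format: a non-empty box that lies inside the image.
    assert 0 <= x1 < x2 <= image_width, "x coordinates out of order or out of bounds"
    assert 0 <= y1 < y2 <= image_height, "y coordinates out of order or out of bounds"
```

As with text answers, a failed assertion would signal that the generated program's output should not be trusted as-is.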

Limitations and cautions

- PropTest adds an extra LLM call to generate property tests, but this is a practical tradeoff for more reliable visual programs and should become cheaper as faster code models improve.

- Different output types require different property-test prompts, which keeps the implementation explicit and also points to a clear future direction: automatically designing prompts by task.

- Generated property tests can themselves contain mistakes, but the paper analyzes this behavior and shows that the tests are usually accurate enough to improve end-to-end results.

- The framework still depends on the quality of the visual tools and APIs used by the generated programs, making it complementary to work on better tool construction and self-refinement.

- The evaluation focuses on image-based VQA and referring expression comprehension, leaving video, temporal, and causal visual reasoning as promising next applications.

How to read this result

This paper is best read as a strong and practical step toward more reliable visual programming: PropTest brings software testing ideas into multimodal reasoning, improves generated program logic across benchmarks, and does so in a reproducible setting with public LLMs.