MotionBits: Video Segmentation through Motion-Level Analysis of Rigid Bodies

News Release Summary

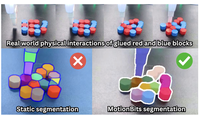

Researchers at Rice University and the University of Texas at Dallas have developed a new video segmentation system designed to identify and track individual rigid objects by analyzing how they physically move, rather than by relying on what they look like. The core problem they tackled is that existing segmentation models, including powerful foundation models like Segment Anything, carve up scenes based on visual appearance and human-defined object categories, which causes them to either split a single composite object into too many pieces or lump separately moving parts together.

To address this, the team defined a new concept called a "MotionBit," grounded in rigid-body kinematics, which groups image pixels together only if they share the same spatial twist (essentially the same instantaneous rotational and translational motion) throughout a video clip. Building on that definition, they created a learning-free, graph-based algorithm that estimates local motion for sampled image points using optical flow, constructs a similarity graph weighted by kinematic consistency, and then clusters nodes into distinct rigid-body segments, using SAM 2 to clean up boundaries.

To evaluate the approach, the team also assembled MoRiBo, a new hand-labeled benchmark of 349 videos spanning teleoperated robot manipulation and everyday human-object interactions. Tested against that benchmark, their method outperformed state-of-the-art video-language models and motion segmentation competitors by an average of 37.3 percentage points in mean intersection-over-union. In a practical robot demonstration, the system enabled a robot to successfully stack composite block objects into a tower in 6 out of 10 trials, while competing methods based on SAM or language-model reasoning achieved zero successes, underscoring the argument that motion-aware segmentation could be a meaningful missing piece for robots operating in cluttered, real-world environments.
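To give a feel for the graph-and-cluster idea, here is a minimal Python sketch. It is not the authors' algorithm: it assumes the point tracks are already available (the real system derives local motion from optical flow), works purely in the 2D image plane, and replaces spatial-twist equivalence with a simpler stand-in, namely that two points on the same rigid body keep a constant distance from each other. The names `rigid_groups` and `tol` are hypothetical, and there is no SAM 2 boundary refinement.

```python
import numpy as np

def rigid_groups(tracks, tol=0.05):
    """Group tracked points that move together rigidly.

    tracks: array of shape (T, N, 2) holding N points tracked over T frames.
    tol:    maximum allowed change in pairwise distance (hypothetical knob).
    Returns an integer label per point.
    """
    tracks = np.asarray(tracks, dtype=float)
    n_points = tracks.shape[1]

    # Pairwise distances in every frame: shape (T, N, N).
    diffs = tracks[:, :, None, :] - tracks[:, None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)

    # Points on one rigid body keep a constant mutual distance, so the
    # spread of that distance over time serves as a consistency score.
    spread = dists.max(axis=0) - dists.min(axis=0)
    adjacent = spread < tol  # graph edges between consistent point pairs

    # Cluster the graph into connected components (depth-first search).
    labels = np.full(n_points, -1)
    current = 0
    for seed in range(n_points):
        if labels[seed] != -1:
            continue
        labels[seed] = current
        stack = [seed]
        while stack:
            i = stack.pop()
            for j in np.flatnonzero(adjacent[i] & (labels == -1)):
                labels[j] = current
                stack.append(j)
        current += 1
    return labels
```

Called on tracks from a static object and a second object that rotates and translates, the function assigns each object its own label. Thresholding plus connected components is the crudest possible clustering choice; it is used here only to keep the sketch short.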

Abstract

Rigid bodies constitute the smallest manipulable elements in the real world, and understanding how they physically interact is fundamental to embodied reasoning and robotic manipulation. Thus, accurate detection, segmentation, and tracking of moving rigid bodies is essential for enabling reasoning modules to interpret and act in diverse environments. However, current segmentation models trained on semantic grouping are limited in their ability to provide meaningful interaction-level cues for completing embodied tasks. To address this gap, we introduce MotionBit, a novel concept that, unlike prior formulations, defines the smallest unit in motion-based segmentation through kinematic spatial twist equivalence, independent of semantics. In this paper, we contribute (1) the MotionBit concept and definition, (2) a hand-labeled benchmark, called MoRiBo, for evaluating moving rigid-body segmentation across robotic manipulation and human-in-the-wild videos, and (3) a learning-free graph-based MotionBits segmentation method that outperforms state-of-the-art embodied perception methods by 37.3% in macro-averaged mIoU on the MoRiBo benchmark. Finally, we demonstrate the effectiveness of MotionBits segmentation for downstream embodied reasoning and manipulation tasks, highlighting its importance as a fundamental primitive for understanding physical interactions.
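For readers unfamiliar with the metric, the sketch below shows one common way to compute mask IoU and a macro average in Python. It rests on assumptions: the text here does not say which groups the paper averages over or how predicted segments are matched to ground truth, so grouping by benchmark subset is a guess, and `mask_iou` and `macro_miou` are hypothetical names.

```python
import numpy as np

def mask_iou(pred, gt):
    """Intersection-over-union of two binary masks of equal shape."""
    pred = np.asarray(pred, dtype=bool)
    gt = np.asarray(gt, dtype=bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:
        # Both masks empty: treated as perfect agreement (an assumption).
        return 1.0
    return float(np.logical_and(pred, gt).sum() / union)

def macro_miou(ious_by_subset):
    """Average the per-subset mean IoU so each subset counts equally.

    ious_by_subset: dict mapping a subset name to a list of IoU values.
    """
    per_subset = [float(np.mean(values)) for values in ious_by_subset.values()]
    return float(np.mean(per_subset))
```

Macro averaging weights each subset equally regardless of how many masks it contains, which keeps a large subset from dominating the final score.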

Citation

@article{qianmotionbits,
  title = {MotionBits: Video Segmentation through Motion-Level Analysis of Rigid Bodies},
  author = {Qian, Howard H. and Ren, Kejia and Xiang, Yu and Ordonez, Vicente and Hang, Kaiyu},
  journal = {arXiv preprint arXiv:2603.06846},
  url = {https://arxiv.org/abs/2603.06846},
}