Gender Bias in Coreference Resolution: Evaluation and Debiasing Methods

News Release Summary

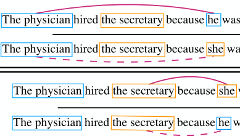

Researchers at UCLA, the University of Virginia, and the Allen Institute for Artificial Intelligence have found that widely used coreference resolution systems — software that identifies when different words in a sentence refer to the same person or thing — systematically reflect gender stereotypes in ways that could harm people in real applications. To measure the problem, the team built a new test dataset called WinoBias, consisting of 3,160 sentences that pair occupations with gendered pronouns in ways that should not, logically, influence which person the pronoun refers to — but often do. When they ran three established coreference systems through WinoBias, all three performed noticeably better when pronouns matched gender-stereotypical expectations (linking "she" to "nurse," for example) than when they ran against them, with an average performance gap of 21.1 points on the F1 scoring scale. The researchers traced much of the bias to the OntoNotes training corpus, where over 80 percent of entities referred to by gendered pronouns were male, and to word embeddings that encode stereotyped associations. To counter this, they developed a data-augmentation technique that generates a mirror-image version of the training data by swapping all male and female references, and combined it with existing methods for debiasing word embeddings. That combination effectively closed the performance gap on WinoBias without meaningfully hurting accuracy on standard benchmarks — a result that matters because coreference resolution feeds into a wide range of downstream language technologies, meaning unchecked bias in these systems can quietly propagate through many applications.

abstract

We introduce a new benchmark, WinoBias, for coreference resolution focused on gender bias. Our corpus contains Winograd-schema style sentences with entities corresponding to people referred by their occupation (e.g. the nurse, the doctor, the carpenter). We demonstrate that a rule-based, a feature-rich, and a neural coreference system all link gendered pronouns to pro-stereotypical entities with higher accuracy than anti-stereotypical entities, by an average difference of 21.1 in F1 score. Finally, we demonstrate a data-augmentation approach that, in combination with existing word-embedding debiasing techniques, removes the bias demonstrated by these systems in WinoBias without significantly affecting their performance on existing coreference benchmark datasets. Our dataset and code are available at http://winobias.org.

details

citation

@inproceedings{zhao2018gender,

title = {Gender Bias in Coreference Resolution: Evaluation and Debiasing Methods},

author = {Zhao, Jieyu and Wang, Tianlu and Yatskar, Mark and Ordonez, Vicente and Chang, Kai-Wei},

year = {2018},

booktitle = {North American Chapter of the Association for Computational Linguistics. NAACL 2018},

url = {https://arxiv.org/abs/1804.06876},

}