Gender Bias in Contextualized Word Embeddings

News Release Summary

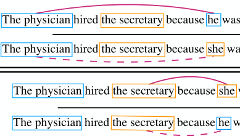

Researchers from UCLA, the University of Virginia, the Allen Institute for Artificial Intelligence, and the University of Cambridge have found that ELMo, a widely used system for generating context-aware word representations in natural language processing, encodes meaningful gender bias that flows downstream into practical applications. The team traced the problem partly to skewed training data: in the One Billion Word Benchmark corpus used to train ELMo, male pronouns appear roughly three times as often as female pronouns, and male pronouns co-occur more frequently with occupational terms regardless of whether those jobs are traditionally male or female. Using principal component analysis, the researchers showed that ELMo's internal geometry actually captures gender along two distinct dimensions — one tied to surrounding context, another tied to the word itself — and that a classifier can predict a male entity's gender from an occupation word about 14 percentage points more accurately than a female entity's, a sign that the model handles the two genders unequally. When a state-of-the-art coreference resolution system built on ELMo was tested on WinoBias, a diagnostic dataset designed to probe occupational gender stereotypes, it showed a gap of nearly 30 percentage points between its accuracy on gender-stereotypical versus gender-counter-stereotypical examples — substantially worse than a comparable system using the older, non-contextualized GloVe embeddings. The team tested two remedies: augmenting training data by swapping gendered words to create balanced examples largely eliminated the bias, while a simpler test-time approach of averaging embeddings from gender-swapped sentences only partially worked. The findings matter because contextualized embeddings like ELMo and BERT are increasingly the backbone of production NLP systems, meaning unexamined biases in these foundational components can quietly propagate into real-world tools.

abstract

In this paper, we quantify, analyze and mitigate gender bias exhibited in ELMo's contextualized word vectors. First, we conduct several intrinsic analyses and find that (1) training data for ELMo contains significantly more male than female entities, (2) the trained ELMo embeddings systematically encode gender information and (3) ELMo unequally encodes gender information about male and female entities. Then, we show that a state-of-the-art coreference system that depends on ELMo inherits its bias and demonstrates significant bias on the WinoBias probing corpus. Finally, we explore two methods to mitigate such gender bias and show that the bias demonstrated on WinoBias can be eliminated.

citation

@inproceedings{zhao2019gender,

title = {Gender Bias in Contextualized Word Embeddings},

author = {Zhao, Jieyu and Wang, Tianlu and Yatskar, Mark and Cotterell, Ryan and Ordonez, Vicente and Chang, Kai-Wei},

year = {2019},

booktitle = {North American Chapter of the Association for Computational Linguistics. NAACL 2019},

url = {https://arxiv.org/abs/1904.03310},

}